Russell, S. & Norvig, P. Artificial Intelligence: A Modern Approach (Pearson Press, 2021).

Turing, A. M. Computing machinery and intelligence. Mind 59, 433–460 (1950).

Tracy, M., Cerdá, M. & Keyes, K. M. Agent-based modeling in public health: current applications and future directions. Annu. Rev. Public Health 39, 77–94 (2018).

Sridharan, P. & Ghosh, M. Gene expression and agent-based modeling improve precision prognosis in breast cancer. Sci. Rep. 15, 17059 (2025).

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 35, 24824–24837 (2022).

Christiano, P. F. et al. Deep reinforcement learning from human preferences. In Advances in Neural Information Processing Systems Vol. 30 (eds Guyon, I. et al.) (Curran Associates, 2017).

Wang, Y. et al. Reinforcement learning for reasoning in large language models with one training example. Preprint at arXiv (2025).

DeepSeek-AI et al. DeepSeek-V3.2: Pushing the frontier of open large language models. Preprint at arXiv (2025).

Rastogi, A. et al. Magistral. Preprint at arXiv (2025).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Burstein, J., Doran, C. & Solorio, T. (eds). BERT: pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies Vol. 1, 4171–4186 (Association for Computational Linguistics, 2019).

Workshop, B. et al. BLOOM: a 176B-parameter open-access multilingual language model. Preprint at arXiv (2022).

Ji, Z. et al. Survey of hallucination in natural language generation. ACM Comput. Surv. 55, 248:1–248:38 (2023).

Kalai, A. T., Nachum, O., Vempala, S. S. & Zhang, E. Why language models hallucinate. Preprint at arXiv (2025).

Jayaraman, P., Desman, J., Sabounchi, M., Nadkarni, G. N. & Sakhuja, A. A primer on reinforcement learning in medicine for clinicians. NPJ Digit. Med. 7, 337 (2024).

Sutton, R. S. & Barto, A. Reinforcement Learning: An Introduction (The MIT Press, 2020).

Ouyang, L. et al. Training language models to follow instructions with human feedback. In Proceedings of the 36th International Conference on Neural Information Processing Systems (eds Koyejo, S. et al.) 27730–27744 (Curran Associates, 2022).

Casper, S. et al. Open problems and fundamental limitations of reinforcement learning from human feedback. Trans. Mach. Learn. Res. (2023).

Skalse, J., Howe, N. H. R., Krasheninnikov, D. & Krueger, D. Defining and characterizing reward hacking. In Proceedings of the 36th International Conference on Neural Information Processing Systems (eds Koyejo, S. et al.) 9460–9471 (Curran Associates, 2022).

Uesato, J. et al. Solving math word problems with process- and outcome-based feedback. Preprint at arXiv (2022).

Lightman, H. et al. Let’s verify step by step. In Proceedings of the 12th International Conference on Learning Representations (eds Kim, B. et al.) 39578–39601 (2024).

Guo, D. et al. DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning. Nature 645, 633–638 (2025).

Bai, Y. et al. Constitutional AI: harmlessness from AI feedback. Preprint at arXiv (2022).

Novikov, A. et al. AlphaEvolve: a coding agent for scientific and algorithmic discovery. Preprint at arXiv (2025).

Gibney, E. DeepMind unveils ‘spectacular’ general-purpose science AI. Nature 641, 827–828 (2025).

Zhang, J., Hu, S., Lu, C., Lange, R. & Clune, J. Darwin Godel Machine: open-ended evolution of self-improving agents. Preprint at arXiv (2025).

Rogers, A., Boyd-Graber, J. & Okazaki, N. (eds). Towards reasoning in large language models: a survey. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2023 1049–1065 (Association for Computational Linguistics, 2023).

Hendrycks, D. et al. Measuring mathematical problem solving with the MATH dataset. In Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks Vol. 1 (eds Vanschoren, J. & Yeung, S.) (2021).

Korhonen, A., Traum, D. & Màrquez, L. (eds). Explain yourself! Leveraging language models for commonsense reasoning. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics 4932–4942 (Association for Computational Linguistics, 2019).

Taylor, R. et al. Galactica: a large language model for science. Preprint at arXiv (2022).

Wang, L. et al. Parameter-efficient fine-tuning in large language models: a survey of methodologies. Artif. Intell. Rev. 58, 227 (2025).

Fu, Y., Peng, H., Sabharwal, A., Clark, P. & Khot, T. Complexity-based prompting for multi-step reasoning. In Proceedings of the 11th International Conference on Learning Representations (eds Liu, Y. et al.) (2023).

Agirre, E., Apidianaki, M. & Vulić, I. (eds). What makes good in-context examples for GPT-3? In Proceedings of Deep Learning Inside Out (DeeLIO 2022): The 3rd Workshop on Knowledge Extraction and Integration for Deep Learning Architectures 100–114 (Association for Computational Linguistics, 2022).

Zhang, Z., Zhang, A., Li, M. & Smola, A. Automatic chain of thought prompting in large language models. In Proceedings of the 11th International Conference on Learning Representations (eds Liu, Y. et al.) (2023).

Yao, S. et al. Tree of thoughts: deliberate problem solving with large language models. In Proceedings of the 37th Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 11809–11822 (Curran Associates, 2023).

Besta, M. et al. Graph of thoughts: solving elaborate problems with large language models. AAAI 38, 17682–17690 (2024).

Shojaee, P. et al. The illusion of thinking: understanding the strengths and limitations of reasoning models via the lens of problem complexity. Preprint at arXiv (2025).

Goyal, S. et al. Think before you speak: training language models with pause tokens. In Proceedings of the 12th International Conference on Learning Representations (eds Kim, B. et al.) 27896–27923 (2024).

Inui, K., Jiang, J., Ng, V. & Wan, X. (eds). PubMedQA: a dataset for biomedical research question answering. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP) 2567–2577 (Association for Computational Linguistics, 2019).

Cobbe, K. et al. Training verifiers to solve math word problems. Preprint at arXiv (2021).

Ku, L.-W., Martins, A. & Srikumar, V. (eds). Math-Shepherd: verify and reinforce LLMs step-by-step without human annotations. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics Vol. 1, 9426–9439 (Association for Computational Linguistics, 2024).

Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K. & Yao, S. Reflexion: language agents with verbal reinforcement learning. In Proceedings of the 37th International Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 8634–8652 (Curran Associates, 2023).

Gou, Z. et al. CRITIC: large language models can self-correct with tool-interactive critiquing. In Proceedings of the 12th International Conference on Learning Representations (eds Kim, B. et al.) 57734–57811 (2024).

Madaan, A. et al. Self-refine: iterative refinement with self-feedback. In Proceedings of the 37th International Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 46534–46594 (Curran Associates, 2023).

Crosby, M., Rovatsos, M. & Petrick, R. Automated agent decomposition for classical planning. In Proceedings of the International Conference on Automated Planning and Scheduling Vol. 23 (eds Borrajo, D. et al.) 46–54 (2013).

Huang, X. et al. Understanding the planning of LLM agents: a survey. Preprint at arXiv (2024).

Zhou, D. et al. Least-to-most prompting enables complex reasoning in large language models. In Proceedings of the 11th International Conference on Learning Representations (eds Liu, Y. et al.) (2023).

Xu, B. et al. ReWOO: decoupling reasoning from observations for efficient augmented language models. Preprint at arXiv (2023).

Yao, S. et al. ReAct: synergizing reasoning and acting in language models. In Proceedings of the 11th International Conference on Learning Representations (eds Liu, Y. et al.) (2023).

Shen, Y. et al. HuggingGPT: Solving AI tasks with ChatGPT and its friends in Hugging Face. In Proceedings of the 37th Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 38154–38180 (Curran Associates, 2023).

Duh, K., Gomez, H. & Bethard, S. (eds). ADaPT: as-needed decomposition and planning with language models. In Proceedings of Findings of the Association for Computational Linguistics: NAACL 2024 4226–4252 (Association for Computational Linguistics, 2024).

Liu, B. et al. LLM + P: empowering large language models with optimal planning proficiency. Preprint at arXiv (2023).

Feng, P. et al. AGILE: a novel reinforcement learning framework of LLM agents. In Proceedings of the 38th International Conference on Neural Information Processing Systems Vol. 37 (eds Globerson, A. et al.) 5244–5284 (Curran Associates, 2024).

Chang, C. C. et al. Second-generation PLINK: rising to the challenge of larger and richer datasets. Gigascience 4, 7 (2015).

Pedregosa, F. et al. Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Hutter, F., Kotthoff, L. & Vanschoren, J. (eds). Automated Machine Learning: Methods, Systems, Challenges pp. 151–160 (Springer International Publishing, 2019).

Hernandez, J. G., Saini, A. K., Ghosh, A. & Moore, J. H. The tree-based pipeline optimization tool: tackling biomedical research problems with genetic programming and automated machine learning. Patterns 6, 101314 (2025).

Himmelstein, D. S. et al. Systematic integration of biomedical knowledge prioritizes drugs for repurposing. Elife 6, e26726 (2017).

Swanson, K., Wu, W., Bulaong, N. L., Pak, J. E. & Zou, J. The Virtual Lab of AI agents designs new SARS-CoV-2 nanobodies. Nature 646, 716–723 (2025).

Schick, T. et al. Toolformer: language models can teach themselves to use tools. In Proceedings of the 37th International Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 68539–68551 (Curran Associates, 2023).

Lu, P. et al. Chameleon: plug-and-play compositional reasoning with large language models. In Proceedings of the 37th International Conference on Neural Information Processing Systems Vol. 36 (eds Oh, A. et al.) 43447–43478 (Curran Associates, 2023).

Patil, S. G., Zhang, T., Wang, X. & Gonzalez, J. E. Gorilla: Large language model connected with massive APIs. In Proceedings of the 38th International Conference on Neural Information Processing Systems Vol. 37 (eds Globerson, A. et al.) 126544–126565 (Curran Associates, 2024).

Lewis, P. et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. In Proceedings of the 34th International Conference on Neural Information Processing Systems (eds Larochelle, H. et al.) 9459–9474 (Curran Associates, 2020).

Petroni, F. et al. How context affects language models’ factual predictions. In Proceedings of the Automated Knowledge Base Construction (eds McCallum, A. et al.) (2020).

Fan, W. et al. A survey on RAG meeting LLMs: towards retrieval-augmented large language models. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (eds Baeza-Yates, R. & Bonchi, F.) 6491–6501 (Association for Computing Machinery, 2024).

Jeong, M., Sohn, J., Sung, M. & Kang, J. Improving medical reasoning through retrieval and self-reflection with retrieval-augmented large language models. Bioinformatics 40, i119–i129 (2024).

Lu, J. et al. MemoChat: tuning LLMs to use memos for consistent long-range open-domain conversation. Preprint at arXiv (2023).

Zhong, W., Guo, L., Gao, Q., Ye, H. & Wang, Y. MemoryBank: enhancing large language models with long-term memory. In Proceedings of the AAAI Conference on Artificial Intelligence (eds Wooldridge, M., Dy, J. & Natarajan, S.) 19724–19731 (2024).

Park, J. S. et al. Generative agents: interactive simulacra of human behavior. Preprint at arXiv (2023).

Li, Y. et al. ChatDoctor: a medical chat model fine-tuned on a large language model meta-AI (LLaMA) using medical domain knowledge. Cureus 15, e40895 (2023).

Rasmussen, P., Paliychuk, P., Beauvais, T., Ryan, J. & Chalef, D. Zep: a temporal knowledge graph architecture for agent memory. Preprint at arXiv (2025).

Edge, D. et al. From local to global: a graph RAG approach to query-focused summarization. Preprint at arXiv (2025).

Zhang, Z. et al. A survey on the memory mechanism of large language model-based agents. ACM Trans. Inf. Syst. 43, 155:1–155:47 (2025).

Yan, B. et al. Beyond self-talk: a communication-centric survey of LLM-based multi-agent systems. Preprint at arXiv (2025).

Ku, L.-W., Martins, A. & Srikumar, V. ChatDev: communicative agents for software development. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics Vol. 1, 15174–15186 (Association for Computational Linguistics, 2024).

Hong, S. et al. MetaGPT: Meta programming for a multi-agent collaborative framework. In Proceedings of the 12th International Conference on Learning Representations (eds Kim, B. et al.) 23247–23275 (2024).

Zhuge, M. et al. GPTSwarm: language agents as optimizable graphs. In Proceedings of the 41st International Conference on Machine Learning Vol. 235 (eds Salakhutdinov, R. R. et al.) 62743–62767 (2024).

Google Cloud. Agent2Agent (A2A) Protocol. a2a-protocol.org/latest/ (2025).

Borghoff, U. M., Bottoni, P. & Pareschi, R. Human-artificial interaction in the age of agentic AI: a system-theoretical approach. Front. Hum. Dyn. 7, 1579166 (2025).

Hua, W. et al. Interactive speculative planning: enhance agent efficiency through co-design of system and user interface. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 14256–14283 (2025).

Hou, X., Zhao, Y., Wang, S. & Wang, H. Model Context Protocol (MCP): landscape, security threats, and future research directions. Preprint at arXiv (2025).

Kuehl, M. et al. BioContextAI is a community hub for agentic biomedical systems. Nat. Biotechnol. 43, 1755–1757 (2025).

Yang, J. et al. SWE-agent: Agent-computer interfaces enable automated software engineering. In Proceedings of the 38th International Conference on Neural Information Processing Systems Vol. 37 (eds Globerson, A. et al.) 50528–50652 (Curran Associates, 2024).

Ferber, D. et al. Development and validation of an autonomous artificial intelligence agent for clinical decision-making in oncology. Nat. Cancer 6, 1337–1349 (2025).

Ku, L.-W., Martins, A. & Srikumar, V. (eds). MedAgents: large language models as collaborators for zero-shot medical reasoning. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2024 599–621 (Association for Computational Linguistics, 2024).

Tu, T. et al. Towards conversational diagnostic artificial intelligence. Nature 642, 442–450 (2025).

Li, S. et al. SciLitLLM: adapt LLMs for scientific literature understanding. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 56025–56048 (2025).

Wang, Y. et al. Biomedical information retrieval with positive-unlabeled learning and knowledge graphs. In ACM Trans. Intell. Syst. Technol. (ACM, 2024).

Yang, Z., Dabre, R., Tanaka, H. & Okazaki, N. SciCap+: a knowledge augmented dataset to study the challenges of scientific figure captioning. J. Nat. Lang. Process. 31, 1140–1165 (2024).

Zhang, S. et al. A multimodal biomedical foundation model trained from fifteen million image–text pairs. NEJM AI 2, AIoa2400640 (2025).

Qi, B. et al. Large language models as biomedical hypothesis generators: a comprehensive evaluation. In Proceedings of the 1st Conference on Language Modeling (eds Artzi, Y. et al.) (2024).

Gottweis, J. et al. Towards an AI co-scientist. Preprint at arXiv (2025).

Zhang, Y. et al. A comprehensive large-scale biomedical knowledge graph for AI-powered data-driven biomedical research. Nat. Mach. Intell. 7, 602–614 (2025).

Huang, K. et al. Automated hypothesis validation with agentic sequential falsifications. In Proceedings of the 42nd International Conference on Machine Learning Vol. 267 (eds Singh, A. et al.) 25372–25437 (PMLR, 2025).

O’Donoghue, O. et al. BioPlanner: automatic evaluation of LLMs on protocol planning in biology. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (eds Bouamor, H., Pino, J. & Bali, K.) 2676–2694 (Association for Computational Linguistics, 2023).

Roohani, Y. et al. BioDiscoveryAgent: an AI agent for designing genetic perturbation experiments. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 26417–26466 (2025).

Liu, S. et al. DrugAgent: automating AI-aided drug discovery programming through LLM multi-agent collaboration. In Proceedings of the 2nd AI4Research Workshop: Towards a Knowledge-Grounded Scientific Research Lifecycle (eds Wang, Q. et al.) (2024).

Ma, M. D. et al. Orchestrating tool ecosystem of drug discovery with intention-aware LLM agents. In Towards Agentic AI for Science: Hypothesis Generation, Comprehension, Quantification, and Validation (eds Koutra, D. et al.) (2025).

Tang, X. et al. CellForge: agentic design of virtual cell models. Preprint at arXiv (2025).

Turcan, A., Huang, K., Li, L. & Zhang, M. J. TusoAI: agentic optimization for scientific methods. Preprint at arXiv (2025).

Huang, K. et al. Biomni: a general-purpose biomedical AI agent. Preprint at bioRxiv (2025).

Lu, C. et al. The AI Scientist: towards fully automated open-ended scientific discovery. Preprint at arXiv (2024).

Yamada, Y. et al. Scientist-v2: workshop-level automated scientific discovery via agentic tree search. Preprint at arXiv (2025).

Ferrag, M. A., Tihanyi, N. & Debbah, M. From LLM reasoning to autonomous AI agents: a comprehensive review. Preprint at arXiv (2025).

Yehudai, A. et al. Survey on evaluation of LLM-based agents. Preprint at arXiv (2025).

Geva, M. et al. Did Aristotle use a laptop? A question answering benchmark with implicit reasoning strategies. Trans. Assoc. Comput. Linguist. 9, 346–361 (2021).

Chan, J. S. et al. MLE-bench: evaluating machine learning agents on machine learning engineering. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 50466–50494 (2025).

Li, Y. et al. Competition-level code generation with AlphaCode. Science 378, 1092–1097 (2022).

Jimenez, C. E. et al. SWE-bench: can language models resolve real-world Github issues? In Proceedings of the 12th International Conference on Learning Representations (eds Kim, B. et al.) 54107–54157 (2024).

Chen, Z. et al. ScienceAgentBench: toward rigorous assessment of language agents for data-driven scientific discovery. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 96934–96990 (2025).

Tian, M. et al. SciCode: a research coding benchmark curated by scientists. In Proceedings of the 38th Conference on Neural Information Processing Systems Datasets and Benchmarks Track Vol. 111 (eds Globerson, A. et al.) 30624–30650 (Curran Associates, 2024).

Srivastava, A. et al. Beyond the imitation game: quantifying and extrapolating the capabilities of language models. Trans. Mach. Learn. Res. (2023).

Jin, D. et al. What disease does this patient have? A large-scale open domain question answering dataset from medical exams. Appl. Sci. 11, 6421 (2021).

Pal, A., Umapathi, L. K. & Sankarasubbu, M. MedMCQA: a large-scale multi-subject multi-choice dataset for medical domain question answering. In Proceedings of the Conference on Health, Inference, and Learning Vol. 174 (eds Flores, G. et al.) 248–260 (PMLR, 2022).

Lou, R. et al. AAAR-1.0: assessing AI’s potential to assist research. In Proceedings of the 42nd International Conference on Machine Learning Vol. 267 (eds Singh, A. et al.) 40361–40383 (PMLR, 2025).

Webber, B., Cohn, T., He, Y. & Liu, Y. (eds). Fact or fiction: verifying scientific claims. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP) 7534–7550 (Association for Computational Linguistics, 2020).

Laurent, J. M. et al. LAB-Bench: measuring capabilities of language models for biology research. Preprint at arXiv (2024).

Bragg, J. et al. AstaBench: rigorous benchmarking of AI agents with a scientific research suite. Preprint at arXiv (2025).

Akhtar, M. et al. Croissant: a metadata format for ML-ready datasets. In Proceedings of the 8th Workshop on Data Management for End-to-End Machine Learning (eds Hulsebos, M., Interlandi, M. & Shankar, S.) 1–6 (Association for Computing Machinery, 2024).

Holmes, J. H. et al. Why is the electronic health record so challenging for research and clinical care? Methods Inf. Med. 60, 32–48 (2021).

Chen, Y. & Esmaeilzadeh, P. Generative AI in medical practice: in-depth exploration of privacy and security challenges. J. Med. Internet Res. 26, e53008 (2024).

European Commission. Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation). (2016).

U.S. Congress. Health Insurance Portability and Accountability Act of 1996 42 U.S.C. 201 note. (1996).

Science and Technology Policy Office. Blueprint for an AI bill of rights: making automated systems work for the American people. (2022).

Das, B. C., Amini, M. H. & Wu, Y. Security and privacy challenges of large language models: a survey. ACM Comput. Surv. 57, 152:1–152:39 (2025).

Chen, Z., Xiang, Z., Xiao, C., Song, D. & Li, B. AgentPoison: red-teaming LLM agents via poisoning memory or knowledge bases. In Proceedings of the 38th International Conference on Neural Information Processing Systems Vol. 37 (eds Globerson, A. et al.) 130185–130213 (Curran Associates, 2024).

Buniello, A. et al. The NHGRI-EBI GWAS Catalog of published genome-wide association studies, targeted arrays and summary statistics 2019. Nucleic Acids Res. 47, D1005–D1012 (2019).

Benson, D. A. et al. GenBank. Nucleic Acids Res. 41, D36–D42 (2013).

Husom, E. J., Goknil, A., Shar, L. K. & Sen, S. The price of prompting: profiling energy use in large language models inference. Preprint at arXiv (2024).

Maliakel, P. J., Ilager, S. & Brandic, I. Investigating energy efficiency and performance trade-offs in LLM inference across tasks and DVFS settings. Preprint at arXiv (2025).

Jiang, P., Sonne, C., Li, W., You, F. & You, S. Preventing the immense increase in the life-cycle energy and carbon footprints of LLM-powered intelligent chatbots. Engineering 40, 202–210 (2024).

Li, P., Yang, J., Islam, M. A. & Ren, S. Making AI less ‘thirsty’. Commun. ACM 68, 54–61 (2025).

Zhang, H., Ning, A., Prabhakar, R. B. & Wentzlaff, D. LLMCompass: enabling efficient hardware design for large language model inference. In Proceedings of the 2024 ACM/IEEE 51st Annual International Symposium on Computer Architecture (ISCA) (eds Vega, A. et al.) 1080–1096 (IEEE, 2024).

Barocas, S., Hardt, M. & Narayanan, A. Fairness and Machine Learning: Limitations and Opportunities (The MIT Press, 2023).

Chang, C. T. et al. Red teaming ChatGPT in medicine to yield real-world insights on model behavior. NPJ Digit. Med. 8, 149 (2025).

Chen, R. J. et al. Algorithmic fairness in artificial intelligence for medicine and healthcare. Nat. Biomed. Eng. 7, 719–742 (2023).

Omar, M. et al. Sociodemographic biases in medical decision making by large language models. Nat. Med. 31, 1873–1881 (2025).

OECD. Health Data Governance for the Digital Age: Implementing the OECD Recommendation on Health Data Governance (OECD Publishing, 2022).

Zhang, C. et al. A survey on federated learning. Knowl.-Based Syst. 216, 106775 (2021).

Li, R., Romano, J. D., Chen, Y. & Moore, J. H. Centralized and federated models for the analysis of clinical data. Annu. Rev. Biomed. Data Sci. 7, 179–199 (2024).

Pan, M. Z. et al. Why do multiagent systems fail? In Proceedings of the ICLR 2025 Workshop on Building Trust in Language Models and Applications (eds Goldblum, M. et al.) (2025).

Matsumoto, N. et al. ESCARGOT: an AI agent leveraging large language models, dynamic graph of thoughts, and biomedical knowledge graphs for enhanced reasoning. Bioinformatics 41, btaf031 (2025).

Romano, J. D. et al. The Alzheimer’s Knowledge Base: a knowledge graph for Alzheimer disease research. J. Med. Internet Res. 26, e46777 (2024).

Lobentanzer, S. et al. A platform for the biomedical application of large language models. Nat. Biotechnol. 43, 166–169 (2025).

Lobentanzer, S. et al. Democratizing knowledge representation with BioCypher. Nat. Biotechnol. 41, 1056–1059 (2023).

Zhou, J. et al. Large language models in biomedicine and healthcare. NPJ Artif. Intell. 1, 44 (2025).

Gulcehre, C. et al. Reinforced Self-Training (ReST) for language modeling. Preprint at arXiv (2023).

Gabriel, I., Keeling, G., Manzini, A. & Evans, J. We need a new ethics for a world of AI agents. Nature 644, 38–40 (2025).

Lee, H.-P. (Hank) et al. The impact of generative AI on critical thinking: self-reported reductions in cognitive effort and confidence effects from a survey of knowledge workers. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (eds Yamashita, N. et al.) 1–22 (Association for Computing Machinery, 2025).

Del Rio-Chanona, R. M., Ernst, E., Merola, R., Samaan, D. & Teutloff, O. AI and jobs. A review of theory, estimates, and evidence. Preprint at arXiv (2025).

Becker, J., Rush, N., Barnes, E. & Rein, D. Measuring the impact of early-2025 AI on experienced open-source developer productivity. Preprint at arXiv (2025).

SIMA Team et al. Scaling instructable agents across many simulated worlds. Preprint at arXiv (2024).

Gao, S. et al. Democratizing AI scientists using ToolUniverse. Preprint at arXiv (2025).

Qu, Y. et al. CRISPR-GPT for agentic automation of gene-editing experiments. Nat. Biomed. Eng. (2025).

Bran, A. M. et al. Augmenting large language models with chemistry tools. Nat. Mach. Intell. 6, 525–535 (2024).

Wang, H. et al. SpatialAgent: an autonomous AI agent for spatial biology. Preprint at bioRxiv (2025).

Ghafarollahi, A. & Buehler, M. J. ProtAgents: protein discovery via large language model multi-agent collaborations combining physics and machine learning. Digit. Discov. 3, 1389–1409 (2024).

Yuksekgonul, M. et al. Optimizing generative AI by backpropagating language model feedback. Nature 639, 609–616 (2025).

Yang, Y. et al. TwinMarket: a scalable behavioral and social simulation for financial markets. In Proceedings of the ICLR 2025 Workshop on World Models: Understanding, Modelling and Scaling (eds Yang, M. et al.) (2025).

Hu, S., Lu, C. & Clune, J. Automated design of agentic systems. In Proceedings of the 13th International Conference on Learning Representations (eds Yue, Y. et al.) 21344–21377 (2025).

Chiruzzo, L., Ritter, A. & Wang, L. (eds). EvoAgent: towards automatic multi-agent generation via evolutionary algorithms. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies Vol. 1, 6192–6217 (Association for Computational Linguistics, 2025).

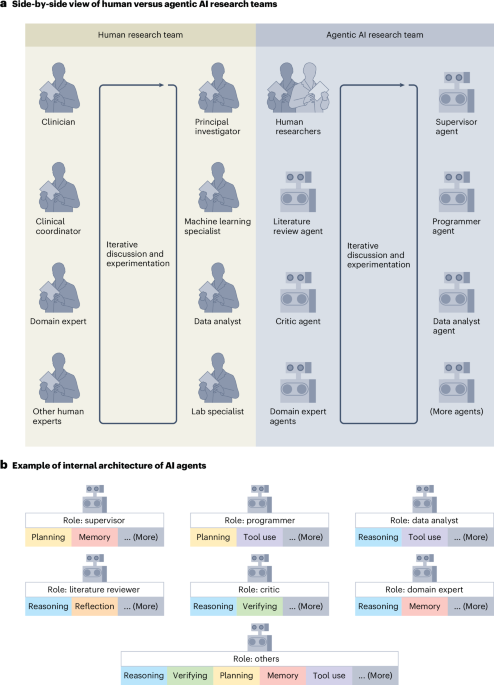

Gao, S. et al. Empowering biomedical discovery with AI agents. Cell 187, 6125–6151 (2024).

Ahdritz, G. et al. OpenFold: retraining AlphaFold2 yields new insights into its learning mechanisms and capacity for generalization. Nat. Methods 21, 1514–1524 (2024).
