What’s a GPU?

Very simply put, a graphics processing unit (GPU) is an extremely powerful number-cruncher.

Less simply: a GPU is a kind of computer processor built to perform many simple calculations at the same time. The more familiar central processing unit (CPU), on the other hand, is built to perform a smaller number of complicated tasks quickly and to switch between tasks efficiently.

To draw a scene on a computer screen, for instance, the computer must decide the colour of millions of pixels several times every second. A 1920 x 1080 screen has 2.07 million pixels per frame. At a frame rate of 60 per second, you will be updating more than 120 million pixels per second. Every pixel’s colour will also depend on lighting, textures, shadows, and the ‘material’ of the object.
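The arithmetic above can be checked in a few lines of Python. This is only a sketch of the pixel count; the variable names are illustrative and do not come from any graphics library.

```python
# Pixel throughput for a 1920 x 1080 screen redrawn 60 times per second.
WIDTH, HEIGHT = 1920, 1080   # screen resolution in pixels
FRAME_RATE = 60              # frames drawn per second

pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * FRAME_RATE

print(f"{pixels_per_frame / 1e6:.2f} million pixels per frame")
print(f"{pixels_per_second / 1e6:.1f} million pixels per second")
```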

This is an example of a task where the same steps are repeated over and over for many pixels, and GPUs are designed to do this better than CPUs.

Imagine you’re a teacher and you need to check the answer papers for an entire school. You could finish it over a few days. But if you have the help of 99 other teachers, each teacher can take a small stack and you can all wrap up in an hour. A GPU is like having hundreds or even thousands of such workers, called cores. While each core won’t be as powerful as a CPU core, the GPU has a lot of them and can thus complete large repetitive workloads faster.

How does a GPU do what it does?

When a videogame wants to show a scene, it sends the GPU a list of objects described using triangles (most 3D models are broken down into triangles). The GPU then runs a sequence called a rendering pipeline, consisting of four steps.

(i) Vertex processing: The GPU first processes the vertices of each triangle to work out where they should appear on the screen. This uses maths with matrices (sort of like organised tables of numbers) to rotate objects, move them, and apply the camera’s perspective.

(ii) Rasterisation: Once the GPU knows where each triangle lands on the screen, it fills in the triangle by deciding which pixels it covers. This step essentially converts the geometry of triangles into pixel candidates on the screen.

(iii) Fragment or pixel shading: For each pixel-like fragment, the GPU determines the final colour. It may look up a texture (e.g. an image wrapped on the object), calculate the amount of lighting based on the direction of a lamp or the sun, apply shadows, and add effects like reflections.

(iv) Writing to frame buffer: The finished pixel colours are written into an area of memory called the frame buffer. The display system reads the buffer and shows it on the screen.
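The four steps can be imitated in plain Python for a single flat triangle on a tiny 8 x 8 "screen". This is a toy sketch, not how a real GPU or graphics API is programmed: the function names, the matrix, and the colour rule are all made up for illustration, and a real GPU would run steps (ii) to (iv) for many pixels at once rather than in a loop.

```python
# A toy software version of the four pipeline steps for one 2D triangle.
# All names and values here are illustrative assumptions.
WIDTH, HEIGHT = 8, 8

def transform(vertex, matrix):
    """Step (i): multiply the vertex (x, y, 1) by a 3x3 matrix."""
    column = (vertex[0], vertex[1], 1.0)
    tx, ty, _ = (sum(m * v for m, v in zip(row, column)) for row in matrix)
    return (tx, ty)

def edge(a, b, p):
    """Signed test telling which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterise(tri):
    """Step (ii): list the pixels whose centres fall inside the triangle."""
    a, b, c = tri
    covered = []
    for y in range(HEIGHT):
        for x in range(WIDTH):
            p = (x + 0.5, y + 0.5)
            w0, w1, w2 = edge(a, b, p), edge(b, c, p), edge(c, a, p)
            # Inside if the point is on the same side of all three edges.
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0):
                covered.append((x, y))
    return covered

def shade(x, y):
    """Step (iii): a stand-in 'shader' that picks a colour from position alone."""
    brightness = int(255 * (x + y) / (WIDTH + HEIGHT - 2))
    return (brightness, 64, 255 - brightness)

# Matrix that scales the model by 6 and moves it by (1, 1).
model_to_screen = [[6.0, 0.0, 1.0],
                   [0.0, 6.0, 1.0],
                   [0.0, 0.0, 1.0]]
triangle = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]

screen_triangle = [transform(v, model_to_screen) for v in triangle]  # step (i)
fragments = rasterise(screen_triangle)                               # step (ii)
frame_buffer = [[(0, 0, 0)] * WIDTH for _ in range(HEIGHT)]
for x, y in fragments:
    frame_buffer[y][x] = shade(x, y)                                 # steps (iii) and (iv)

# Show the frame buffer as text: '#' for a coloured pixel, '.' for an empty one.
for row in frame_buffer:
    print("".join("#" if pixel != (0, 0, 0) else "." for pixel in row))
```

Note that `shade` is called with nothing but the pixel's own position, so every call is independent of the others. That independence is what lets a GPU hand each pixel to a different core.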

Small computer programs called shaders perform the calculations required for these steps. The GPU runs the same shader code on many vertices or many pixels in parallel.

In effect, the GPU quickly reads and writes very large amounts of data, including 3D models, textures, and the final image, which is why many GPUs have their own dedicated memory called VRAM, short for video RAM. VRAM is designed to have high bandwidth, meaning it can move a lot of data in and out per second. However, to avoid having to fetch the same data repeatedly, the GPU also contains smaller, faster memory in the form of caches and arrangements for shared memory, with the aim of keeping memory access from becoming a bottleneck.
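A rough lower bound shows why bandwidth matters. The sketch below counts only the bytes needed to write the final image once per frame, assuming 4 bytes per pixel (an assumption for illustration, not a figure from the article). Reading textures and 3D models adds much more traffic on top.

```python
# Minimum memory traffic just to write the finished image, frame after frame.
WIDTH, HEIGHT = 1920, 1080
FRAME_RATE = 60
BYTES_PER_PIXEL = 4   # assumption: one byte each for red, green, blue and alpha

frame_bytes = WIDTH * HEIGHT * BYTES_PER_PIXEL
bytes_per_second = frame_bytes * FRAME_RATE

print(f"One frame: {frame_bytes / 1e6:.2f} MB")
print(f"Written per second: {bytes_per_second / 1e9:.2f} GB")
```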

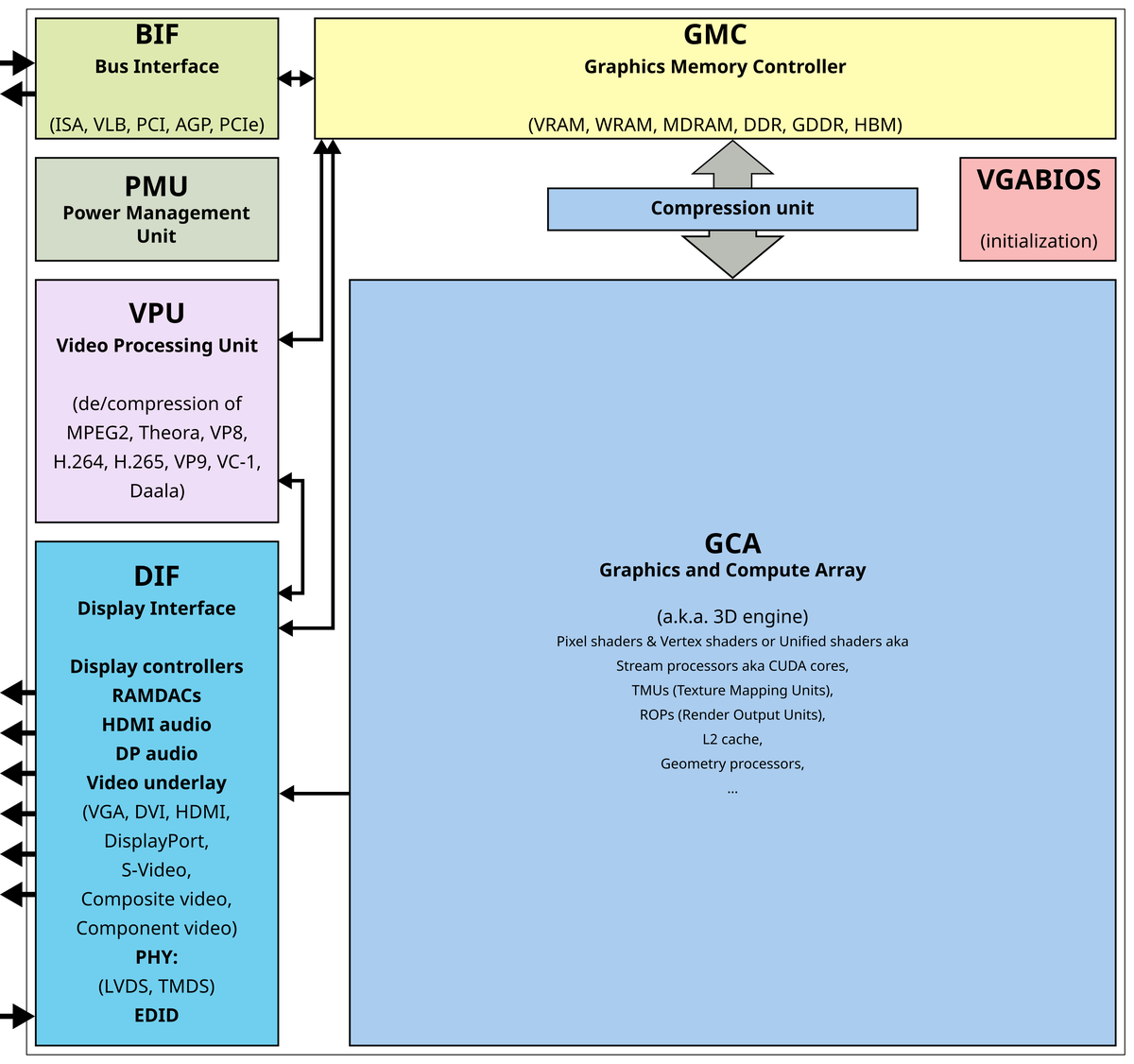

A mock diagram of a GPU as found in graphics cards. | Photo Credit: Public domain

Many tasks outside graphics also involve performing the same kind of calculation on large arrays of numbers, including machine learning, image processing, and simulations (e.g. computer models that simulate rainfall).

Where is the GPU located?

A chip is a flat piece of silicon, called the die, with a fixed surface area measured in sq. mm.

In a computer, the GPU is not a separate layer that sits beneath the CPU; instead it’s just another chip, or set of chips, mounted on the same motherboard or on a graphics card and wired to the CPU with a high-speed connection.

If your computer has a separate graphics card, the die holding the GPU will be under a flat metal heat sink in the middle of the card, surrounded by several VRAM chips. The whole card plugs into the motherboard. Alternatively, if your laptop or smartphone has ‘integrated graphics’, it likely means the GPU and the CPU are on the same die.

This is common in modern systems-on-a-chip, which are basically packages containing different chip types that historically came in separate packages.

Are GPUs smaller than CPUs?

GPUs are not smaller than CPUs in the sense of using some fundamentally smaller kind of electronics. In fact, both use the same kind of silicon transistors made with similar fabrication nodes, e.g. the 3-5 nm class. GPUs differ in how they use the transistors, i.e. they have a different microarchitecture, including how many computing units there are, how they’re connected, how they run instructions, how they access memory, and so on. (E.g. the ‘H’ in Nvidia H100 stands for the Hopper microarchitecture.)

CPU designers devote a lot of the die’s area to complex control logic, the cache (auxiliary memory), and features that improve the chip’s performance and ability to make decisions faster. A GPU, on the other hand, will ‘spend’ more area on many repeating compute blocks and very wide data paths, plus the hardware required to support these blocks, such as memory controllers, register files, display controllers, sensors, on-chip networks, and so on.

As a result, GPUs, especially the high-end ones, often have more total transistors than many CPUs, and they aren’t necessarily more densely packed per sq. mm. In fact, high-end GPUs are often very large. Some GPU packages also place dynamic RAM very close to the GPU die, connected using short wires with high bandwidth. Basically, the layout of components needs to ensure the GPU can transfer large volumes of data quickly.

Why do neural networks use GPUs?

Neural networks are mathematical models with multiple layers that learn patterns from data and make predictions. They can run on CPUs or GPUs, but engineers prefer GPUs because the networks run many tasks in parallel and move a lot of data.

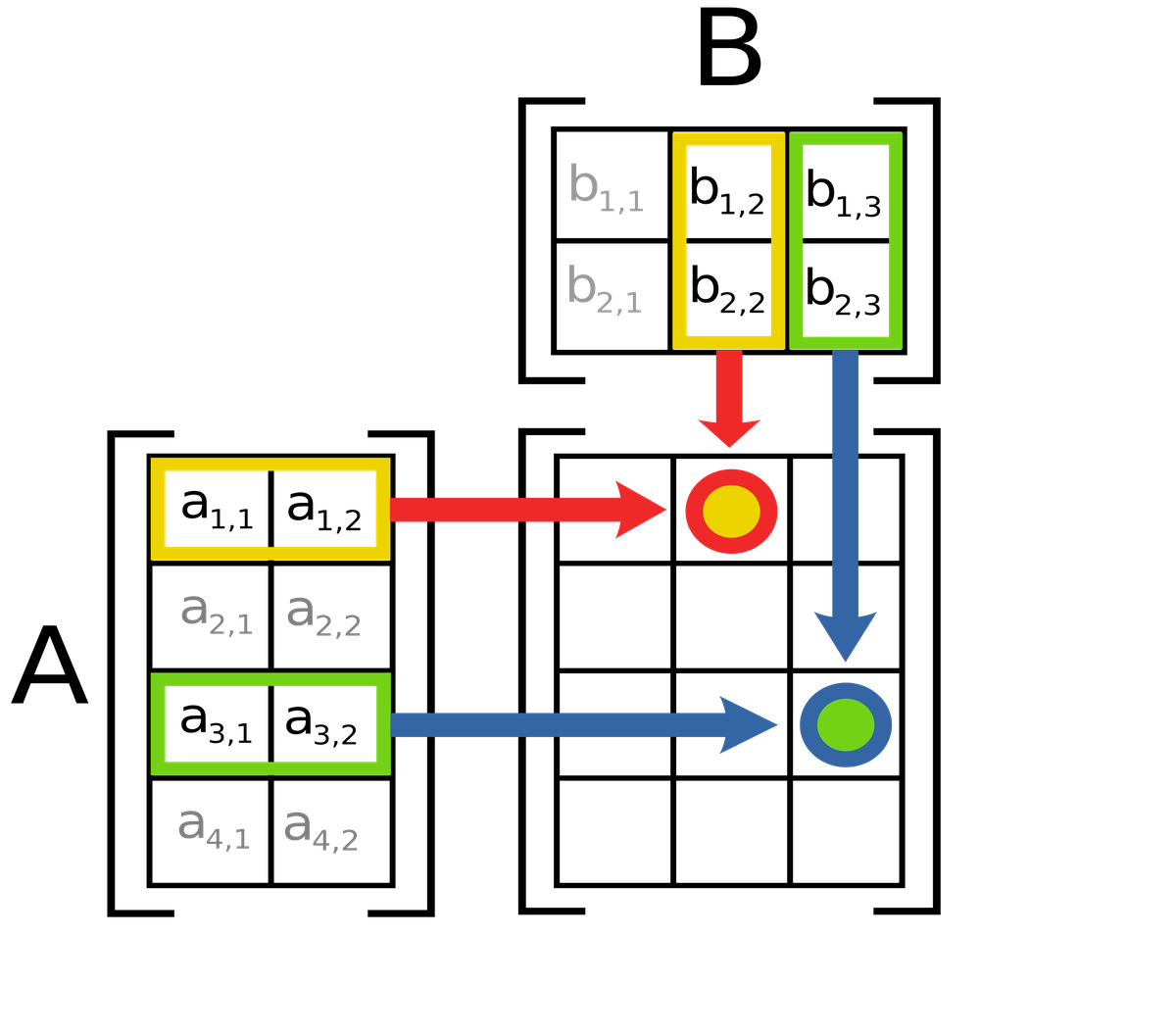

The maths of neural networks is in the form of matrix and tensor operations. Matrix operations are calculations on two-dimensional grids of numbers, like rows and columns; the numbers in each grid can represent various properties of a single object. The essential problem is to multiply two grids to get a new grid. Tensor operations are the same idea but use higher-dimensional grids, like 3D or 4D arrays. This is useful when the neural network is processing images, for instance, which have more properties of interest than, say, a sentence.

In matrix multiplication, the value of c₁₂ (red-yellow circle) is equal to a₁₁b₁₂ + a₁₂b₂₂. Likewise, the value of c₃₃ (blue-green circle) is equal to a₃₁b₁₃ + a₃₂b₂₃. | Photo Credit: Lakeworks (CC BY-SA)

A neural network repeatedly adds and multiplies matrices and tensors. Since it’s the same set of mathematical rules, just applied to different numbers, the thousands of cores of a GPU are ideal for the job.
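The rule in the figure caption can be written out as a short Python sketch. The matrices `a` and `b` hold made-up values, chosen only to have the same shapes as in the figure (4 x 2 and 2 x 3), and `matmul` is a plain illustrative function, not a library call. Python counts from 0, so c12 is `c[0][1]` and c33 is `c[2][2]`.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Each output cell is a row of `a` combined with a column of `b`.
    # No cell depends on any other cell, so all of them could be computed at once.
    return [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for i in range(rows)]

a = [[1, 2],
     [3, 4],
     [5, 6],
     [7, 8]]          # 4 rows x 2 columns (example values)
b = [[1, 0, 2],
     [0, 1, 3]]       # 2 rows x 3 columns (example values)

c = matmul(a, b)      # 4 rows x 3 columns

# c12 = a11*b12 + a12*b22, written with 0-based indices.
c12_by_hand = a[0][0] * b[0][1] + a[0][1] * b[1][1]
# c33 = a31*b13 + a32*b23.
c33_by_hand = a[2][0] * b[0][2] + a[2][1] * b[1][2]

print(c[0][1] == c12_by_hand, c[2][2] == c33_by_hand)
```

Because every cell of `c` is computed independently, a GPU can assign different cells to different cores instead of working through them one at a time.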

Second, modern neural networks can have millions to billions of parameters. (A parameter is a learned weight or bias value inside the network.) So in addition to doing the maths, the hardware also has to be able to move data fast enough, and GPUs have very high memory bandwidth.
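To get a feel for the amount of data involved, the sketch below estimates how much memory the parameters alone occupy. The 2 bytes per parameter is an assumption (a 16-bit number format), and the two parameter counts are round example values rather than real models.

```python
BYTES_PER_PARAMETER = 2   # assumption: each parameter stored as a 16-bit number

def parameter_memory_bytes(parameter_count, bytes_per_parameter=BYTES_PER_PARAMETER):
    """Memory needed to hold every parameter once, ignoring all other data."""
    return parameter_count * bytes_per_parameter

for count in (1_000_000, 1_000_000_000):   # example sizes: one million, one billion
    size_gb = parameter_memory_bytes(count) / 1e9
    print(f"{count:>13,} parameters -> {size_gb:.3f} GB")
```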

Many GPUs also include tensor cores, which are designed to multiply matrices extremely fast. For example, the NVIDIA H100 Tensor Core GPU can perform around 1.9 quadrillion tensor operations per second on numbers in the 16-bit formats called FP16 and BF16.
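As a back-of-the-envelope illustration of that rate, multiplying two n x n matrices takes roughly 2n³ operations (about n³ multiplications and n³ additions). The sketch below divides that count by the quoted peak figure. The matrix size is an arbitrary example, and real workloads generally run below peak, so the result is a best case rather than a measurement.

```python
PEAK_OPS_PER_SECOND = 1.9e15   # "around 1.9 quadrillion operations per second"
N = 4096                       # example matrix size (an assumption)

operations = 2 * N ** 3        # rough operation count for an N x N by N x N product
seconds = operations / PEAK_OPS_PER_SECOND

print(f"{operations:.3e} operations, about {seconds * 1e6:.0f} microseconds at peak")
```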

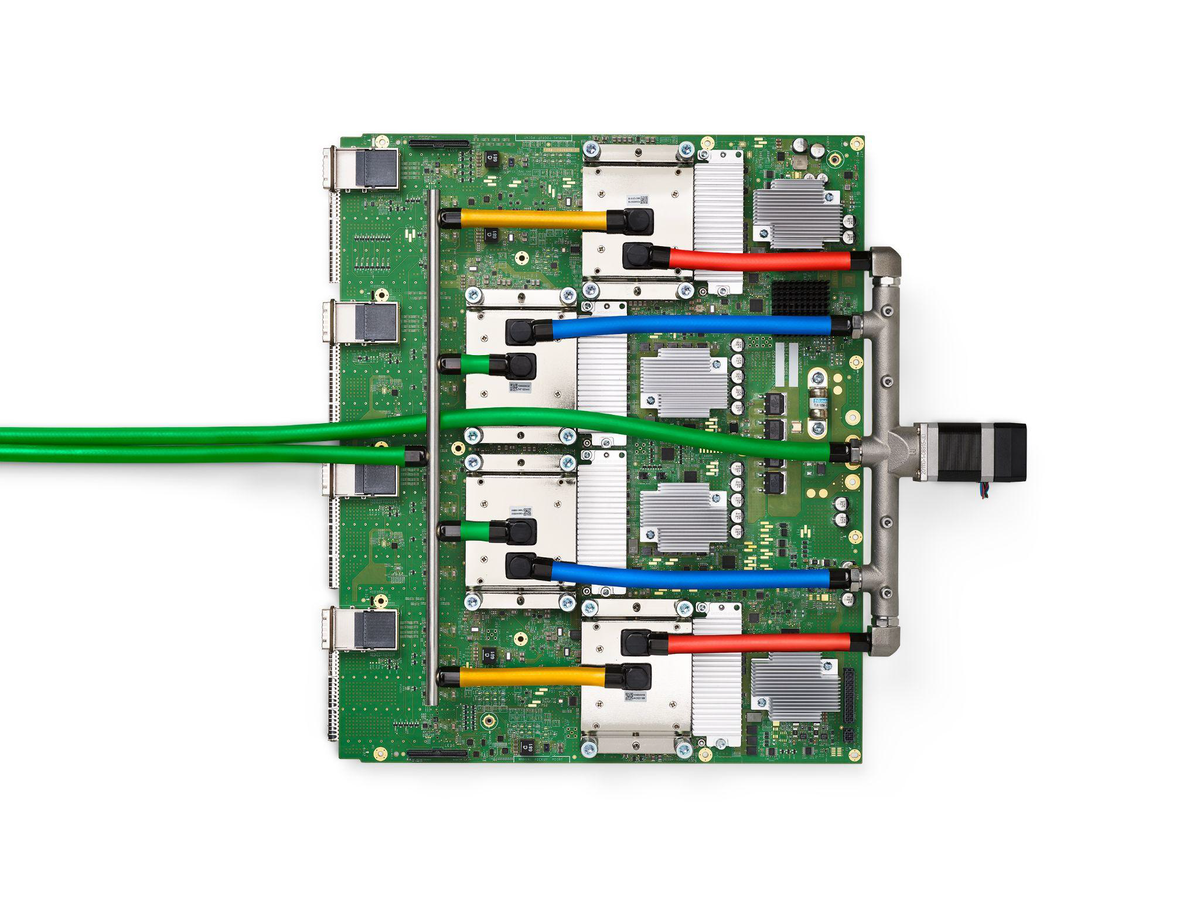

In fact, Google developed chips called Tensor Processing Units (TPUs) to efficiently run the maths that neural networks require.

The green board everything is mounted on is the printed circuit board. The four flat, silver metal blocks arranged in a vertical column near the middle are liquid-cooled packages. The green hoses and the coloured tubes are coolant lines to and from the packages. Each package contains a TPU v4 chip surrounded by four high-bandwidth memory stacks. Four connectors dot the board’s left edge. | Photo Credit: arxiv:2304.01433

How much energy do GPUs consume?

Let’s use a hypothetical example where four GPUs are used to train a neural network to predict the risk of some disease for a person (based on age, BMI, blood markers, some history). Then the same network is put to use.

Each GPU is an Nvidia A100 PCIe, whose board power is around 250 W during training. The GPUs are almost fully used during training. The training duration is 12 hours.

The energy consumed during training will be 12 kWh (four GPUs × 250 W × 12 hours) and during use, around 2 kWh (assuming only one GPU provides the inferences). The server will also consume power for its CPUs, RAM, storage, fans, and networking, and some power will be lost. It’s typical to add 30-60% of the GPU power for these needs. So the total consumption will be around 6 kWh/day for the network to run continuously.
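The training figure follows directly from the numbers in the example, as the sketch below shows. It covers only the training run, since that is the part whose inputs are fully stated, and it applies the 30-60% overhead range to give a whole-server estimate. The variable names are illustrative.

```python
GPU_COUNT = 4
BOARD_POWER_WATTS = 250    # per GPU, during training
TRAINING_HOURS = 12
OVERHEAD_RANGE = (0.30, 0.60)   # extra for CPUs, RAM, storage, fans, networking, losses

# Energy (kWh) = power (kW) x time (h)
gpu_training_kwh = GPU_COUNT * BOARD_POWER_WATTS * TRAINING_HOURS / 1000

# Whole-server estimate once the overhead is added on top of the GPU energy.
server_low_kwh = gpu_training_kwh * (1 + OVERHEAD_RANGE[0])
server_high_kwh = gpu_training_kwh * (1 + OVERHEAD_RANGE[1])

print(f"GPUs alone: {gpu_training_kwh:.1f} kWh")
print(f"With server overhead: {server_low_kwh:.1f} to {server_high_kwh:.1f} kWh")
```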

That’s like running an AC for four to six hours at full compressor power, a water heater for around three hours, or 60 small LED bulbs for 10 hours a day.

Does Nvidia have a monopoly on GPUs?

Nvidia technically doesn’t have a monopoly on GPUs; it enjoys near-complete dominance in some markets and is a very strong market power in artificial intelligence (AI) computing platforms.

In discrete GPUs sold for use in personal computers, industry trackers have reported that Nvidia has at least roughly 90% market share, with AMD and Intel making up most of the rest. As for GPUs used in data centres, Nvidia’s position is strengthened by hardware performance and supply and the CUDA software ecosystem.

CUDA is Nvidia’s software platform to run general-purpose computation (like processing a signal or analysing data) on Nvidia GPUs. As a result, switching away from Nvidia GPUs also means changing software, which companies don’t like to do. In fact, many buyers consider Nvidia GPUs running CUDA software to be the default platform for training and using neural networks at scale.

The legal definition of monopoly depends on whether a firm can control prices or exclude the competition and whether it maintains that power through unlawful conduct. This is why, for instance, European regulators have been investigating whether Nvidia uses its dominance to lock customers in, primarily by tying or discounting GPU prices when buyers also take Nvidia software or related components.

mukunth.v@thehindu.co.in
