Deepfake fraud has gone “industrial”, an analysis published by AI experts has said.

Tools to create tailored, even personalised, scams – leveraging, for example, deepfake videos of Swedish journalists or the president of Cyprus – are no longer niche, but inexpensive and easy to deploy at scale, said the analysis from the AI Incident Database.

It catalogued more than a dozen recent examples of “impersonation for profit”, including a deepfake video of Western Australia’s premier, Roger Cook, hawking an investment scheme, and deepfake doctors promoting skin creams.

These examples are part of a trend in which scammers are using widely available AI tools to perpetrate increasingly targeted heists. Last year, a finance officer at a Singaporean multinational paid out nearly $500,000 to scammers during what he believed was a video call with company leadership. UK consumers are estimated to have lost £9.4bn to fraud in the nine months to November 2025.

“Capabilities have suddenly reached that level where fake content can be produced by pretty much anybody,” said Simon Mylius, an MIT researcher who works on a project linked to the AI Incident Database.

He calculates that “frauds, scams and targeted manipulation” have made up the largest proportion of incidents reported to the database in 11 of the past 12 months. He said: “It’s become very accessible, to a point where there’s really effectively no barrier to entry.”

“The scale is changing,” said Fred Heiding, a Harvard researcher studying AI-powered scams. “It’s becoming so cheap, almost anyone can use it now. The models are getting really good – they’re becoming much faster than most experts think.”

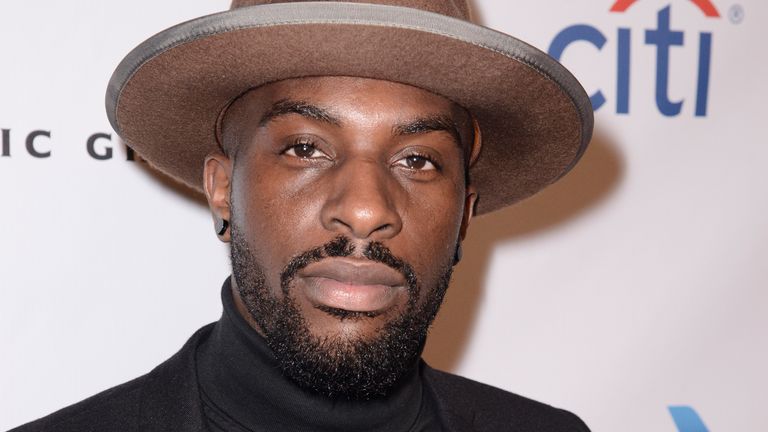

In early January, Jason Rebholz, the chief executive of Evoke, an AI security company, posted a job offer on LinkedIn and was immediately contacted by a stranger in his network, who recommended a candidate.

Within days, he was exchanging emails with someone who, on paper, appeared to be a talented engineer.

“I looked at the resume and I was like, this is actually a really good resume. And so I thought, even though there were some red flags, let me just have a conversation.”

Then things became strange. The candidate’s emails went directly to spam. His resume had quirks. But Rebholz had dealt with unusual candidates before and decided to go ahead with the interview.

Then, when Rebholz took the call, the candidate’s video took nearly a minute to appear.

“The background was extremely fake,” he said. “It just looked super, super fake. And it was really struggling to deal with [the area] around the edges of the individual. Like part of his body was coming in and out … And then when I’m looking at his face, it’s just very soft around the edges.”

Rebholz went through with the conversation, not wanting to face the awkwardness of asking the candidate directly if they were, in fact, an elaborate scam. Afterwards, he sent a recording of it to a contact at a deepfake detection firm, who told him that the video image of the candidate was AI-generated. He rejected the candidate.

Rebholz still doesn’t know what the scammer wanted – an engineering salary, or trade secrets. While there have been reports of North Korean hackers trying to get jobs at Amazon, Evoke is a startup, not a big player.

“It’s like, if we’re getting targeted with this, everyone’s getting targeted with it,” said Rebholz.

Heiding said the worst was ahead. Currently, deepfake voice cloning technology is excellent – making it easy for scammers to impersonate, for example, a grandchild in distress over the phone. Deepfake videos, on the other hand, still have room for improvement.

This could have extreme consequences: for hiring, for elections, and for broader society. Heiding added: “That’ll be the big pain point here, the complete lack of trust in digital institutions, and institutions and material in general.”
